Last Updated on May 8, 2026, 2:03 pm ET

A January 2026 quick poll of ARL member libraries shows that the early enthusiasm about generative AI has largely settled into something more demanding: a field reckoning with governance gaps, uneven staff readiness, and the organizational conditions required to make AI use durable rather than merely interesting. Among 39 respondents, optimism remains high. But the dominant questions have shifted from what AI can do toward what libraries need to build if they want to engage it responsibly at scale.

Background

The poll ran from January 12 to 23, 2026, and was distributed to ARL member representatives. We received 43 responses; after removing 4 incomplete submissions, the analysis reflects 39 completed responses, approximately 31.2% of ARL’s 125 member libraries.
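For readers who want to check the response-rate arithmetic, here is a minimal sketch using only the counts reported above. The function and variable names are illustrative and are not part of the poll instrument.

```python
# Counts as reported in the Background section; names are illustrative.
RESPONSES_RECEIVED = 43
INCOMPLETE_REMOVED = 4
ARL_MEMBER_LIBRARIES = 125


def response_rate(received: int, removed: int, population: int) -> tuple[int, float]:
    """Return (completed responses, completed responses as a percentage of population)."""
    completed = received - removed
    return completed, 100 * completed / population


completed, rate = response_rate(
    RESPONSES_RECEIVED, INCOMPLETE_REMOVED, ARL_MEMBER_LIBRARIES
)
print(f"{completed} completed responses, {rate:.1f}% of member libraries")
```

The percentage describes coverage of the membership only; it says nothing about how representative the respondents are.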

Among respondents, 29 were public university libraries, 8 were private university libraries, 1 was a federal library, and 1 was a public library. The pool skewed toward public university libraries while still capturing private university, federal, and public library members.

These findings should be read as a directional snapshot. Selection bias is likely: institutions already more engaged with AI probably responded at higher rates. The results represent a meaningful signal about engaged institutions, not a statistically representative picture of the full membership.

A Community That Remains Optimistic but Wants Practical Answers

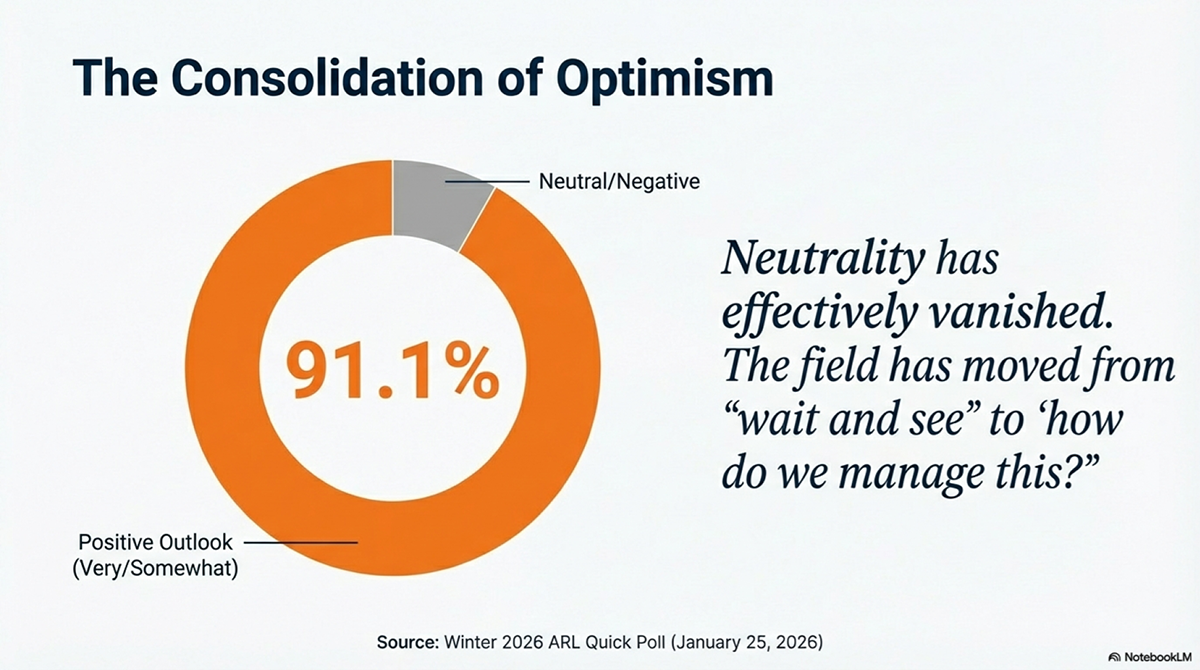

Sentiment toward generative AI stayed strongly positive. Of 39 completed responses, 36 registered as somewhat or very positive. Only 2 respondents selected neutral, and 1 selected somewhat negative. No respondent registered as very negative.
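As a rough illustration, the counts above can be converted to shares of the 39 completed responses. This is a sketch under one assumption worth flagging: the text reports "somewhat positive" and "very positive" only as a combined figure, so the code keeps them as a single category rather than guessing at the split. All names are illustrative.

```python
# Sentiment counts as reported in the text. The two positive categories are
# kept combined because the text gives only their total.
sentiment_counts = {
    "somewhat or very positive": 36,
    "neutral": 2,
    "somewhat negative": 1,
    "very negative": 0,
}


def to_shares(counts: dict[str, int]) -> dict[str, float]:
    """Convert raw counts to percentages of the total, rounded to one decimal."""
    total = sum(counts.values())
    return {label: round(100 * n / total, 1) for label, n in counts.items()}


total_responses = sum(sentiment_counts.values())
sentiment_shares = to_shares(sentiment_counts)

print(f"Total responses: {total_responses}")
for label, share in sentiment_shares.items():
    print(f"{label}: {share}%")
```

Because each share is rounded independently, the percentages may not sum to exactly 100.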

That distribution is notable not because optimism is surprising, but because of what coexists with it. The same respondents who expressed positive views consistently identified governance gaps, uneven staff readiness, and unclear institutional roles as their most pressing challenges. Optimism and anxiety about organizational capacity appear in the same institutions, often in the same responses. Libraries are not waiting for confidence to arrive before they act; they are acting and hoping the organizational infrastructure catches up.

Implementation Is Outpacing Governance

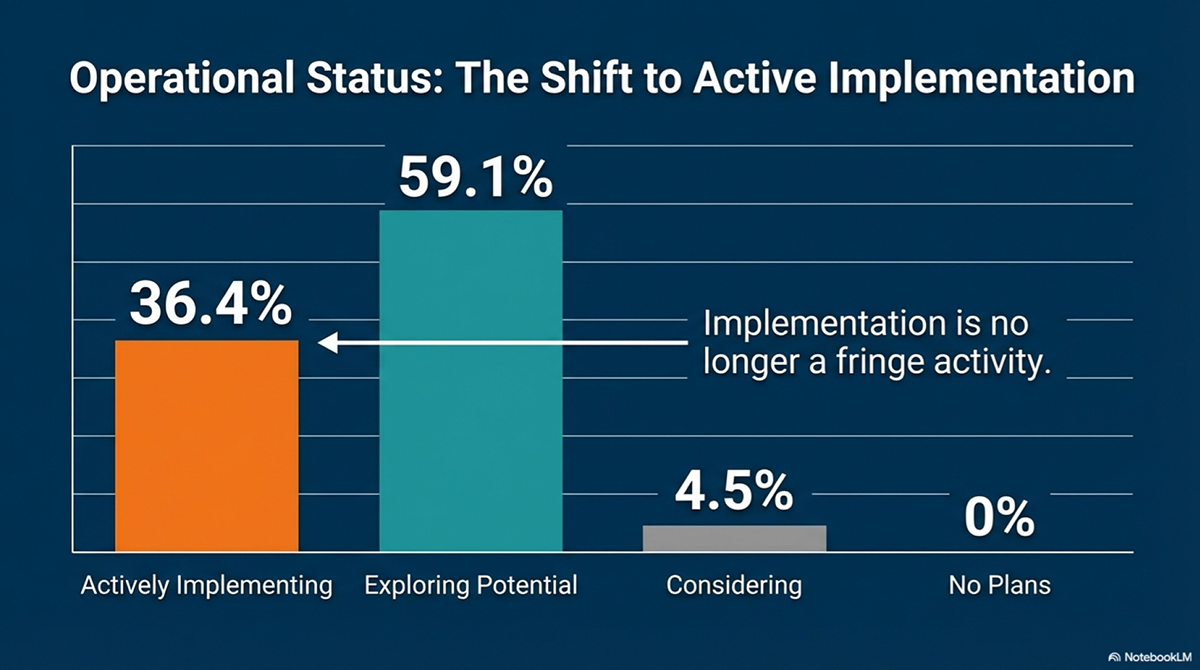

Most respondents reported that their libraries remain in an exploratory phase, but more than one-third indicated active implementation. That gap between exploration and implementation matters less than the governance gap it reveals.

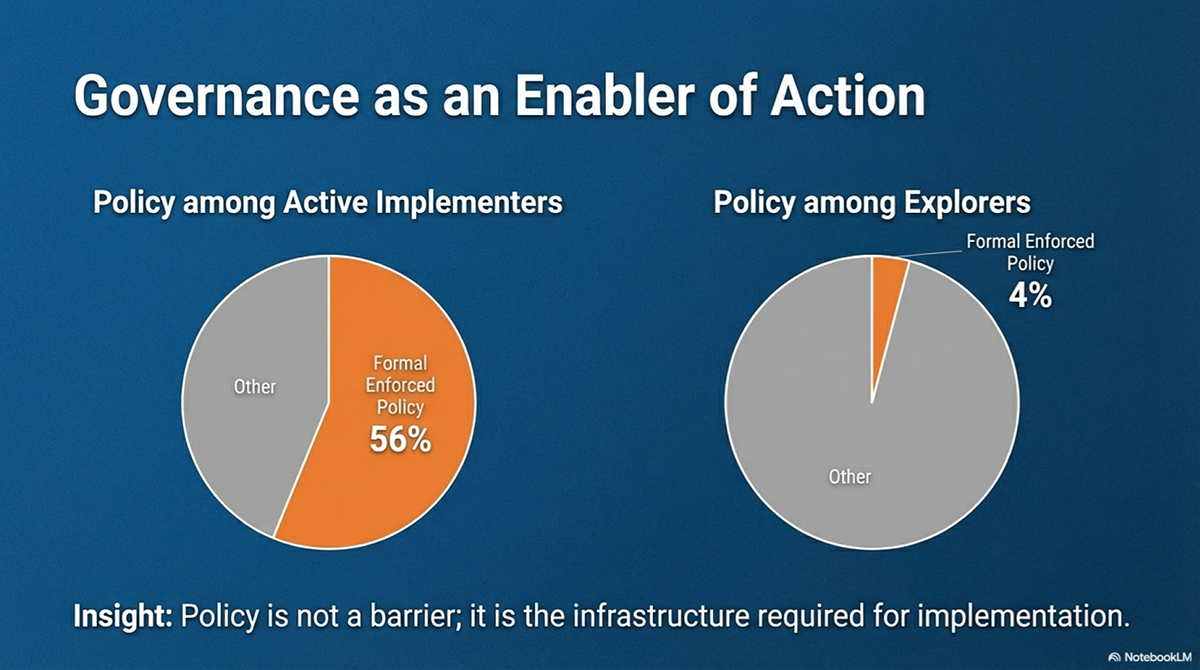

The poll found that only a minority of respondents reported formal, enforced policies for generative AI. A larger share described informal guidance or policies still under development. Libraries actively implementing AI were far more likely to report formal governance structures than those still exploring. Put differently: libraries that have moved further into adoption have built more governance. Even so, many libraries doing substantial AI work have not yet defined shared expectations around acceptable use, privacy, human oversight, or accountability.

This means libraries are making real decisions about tools, workflows, and use cases before they have established the frameworks that should govern those decisions. As AI becomes more embedded in research support, instruction, and operations, the cost of that gap will grow. Governance built after the fact tends to accommodate the practices already in place rather than shape them.

Staff Attitudes: The Most Telling Signal in the Poll

The data on staff attitudes deserve close attention because they reveal something about organizational health that the other findings only hint at.

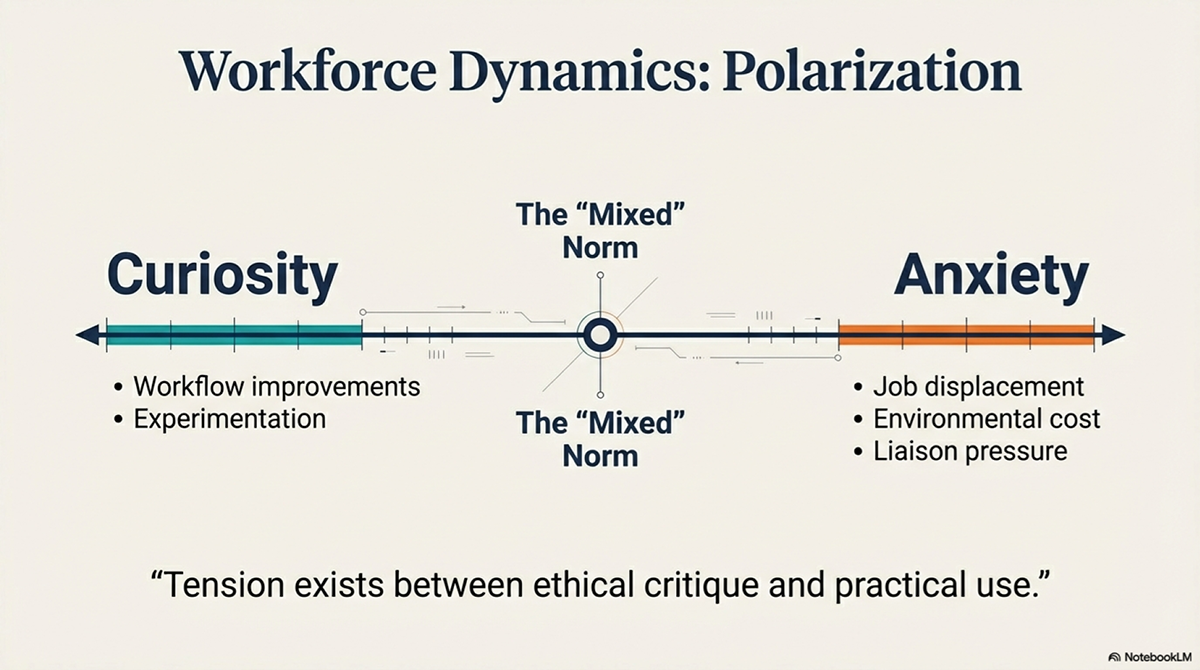

Respondents described staff attitudes as occupying a wide spectrum. Curiosity and enthusiasm appeared alongside skepticism, caution, and concern about what AI might mean for professional expertise and job security. Several responses noted that staff are not dividing cleanly into supporters and opponents. The more common pattern was variation within teams: some people eager to experiment, others cautiously interested, others uneasy about whether AI will change expectations for their work or erode the value of what they currently do well.

Staff attitudes reflect the organizational conditions libraries have created: how clearly leadership has communicated direction, how much protected time and structured learning staff have access to, and whether experimentation feels safe or feels like an audit. Where staff are uncertain or resistant, the question worth asking is not how to convince them but what conditions are missing.

Several responses also noted environmental and ethical concerns as distinct from concerns about job displacement. A meaningful subset of staff appear to be skeptical of AI on principled grounds, not just practical ones. Libraries that treat this as a communication problem to manage will handle it less well than those that treat it as a legitimate value question worth engaging directly.

Organizational readiness for AI depends heavily on this dimension. Tools and pilots can be stood up quickly. The internal conditions that help staff engage AI with confidence and sound judgment take longer to build, and no amount of enthusiasm at the leadership level substitutes for them.

What Library Leaders Say They Need

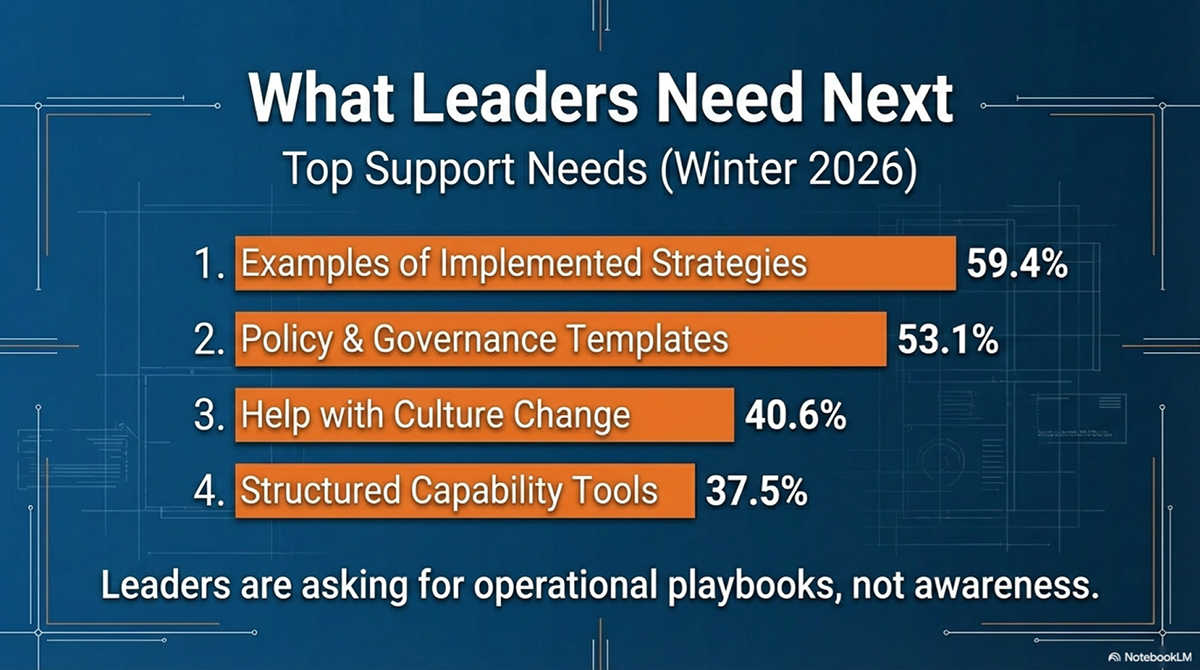

When asked what support would be most useful over the next 12 months, respondents pointed to four categories with particular consistency: practical examples of implemented AI strategies, governance templates they can adapt rather than build from scratch, support for organizational culture change, and structured tools for assessing institutional AI readiness.

The fourth category warrants emphasis. Interest in diagnostic frameworks and readiness assessment tools appeared across multiple responses, suggesting that library leaders want structured ways to understand where their organizations actually stand before committing to particular implementation paths. This is a different kind of need from a request for use-case examples or policy templates. It reflects awareness that libraries vary considerably in their current capacity, and that the advice useful to an institution with a mature AI working group and formal governance differs substantially from the advice useful to one just beginning to define its approach.

Notably absent from the responses: requests for more AI tools themselves, or for expanded vendor access. The expressed needs were organizational and intellectual, not technological. Leaders appear less constrained by the available tools than by the frameworks, capacity, and shared understanding needed to use those tools well.

The responses also showed strong interest in peer exchange, particularly candid conversation with institutions at similar stages rather than aspirational benchmarking against the most advanced programs. Libraries want to learn from peers navigating the same problems, not just from showcases of what the best-resourced institutions have built.

Where the Library’s Role Remains Contested

The poll’s optimism about library relevance should be read against an important structural reality: libraries are not the only campus actors claiming authority over how AI gets used in research and learning. In most institutions, IT organizations, provosts’ offices, faculty senates, and research offices are simultaneously developing their own frameworks, policies, and priorities. The library’s role as convener, evaluator, and steward of information is a claim, not a settled outcome.

Respondents described libraries as educators, evaluators, implementers, and institutional partners. Several noted the library’s potential role in campus AI governance, AI literacy instruction, and research support redesign. That ambition is appropriate. But it requires libraries to articulate what they uniquely contribute and to build relationships with the campus actors who will decide whether the library’s expertise matters to their AI-related work.

The library’s comparative advantage sits in a specific cluster: expertise in information evaluation, provenance and bias, ethical reasoning about knowledge systems, and cross-campus coordination across disciplines. Those capabilities are genuinely distinctive. But they require active positioning. Libraries that assume relevance will find themselves consulted late, if at all. Libraries that invest in making the case will find more doors open.

What Has Shifted Since ARL’s 2025 Poll

Compared with the 2025 quick poll, the 2026 responses show a shift in emphasis rather than a change in overall sentiment. The 2025 findings framed the field as moving from early interest toward strategic action. That framing still holds. What the 2026 data adds is a clearer picture of what strategic action actually requires.

In the earlier poll, AI was emerging as an important topic. In the 2026 responses, it appears more concretely tied to ongoing operational questions: which service models need to change, what staff development looks like in practice, how governance should develop alongside adoption rather than after it, and where libraries can make a distinctive contribution rather than simply participate in campus-wide conversations. The 2025 poll identified a moment; the 2026 responses describe the work.

Because the two quick polls do not represent a matched set of institutions or respondents, this comparison is directional rather than definitive. Even so, the direction is clear enough to be useful.

Priorities for Research Libraries

The poll findings point to six areas where research libraries stand to make the most consequential investments.

1. Build governance alongside adoption, not after it.

The poll’s clearest finding is the governance gap: many libraries are making significant decisions about AI tools, workflows, and use cases before they have established shared expectations around acceptable use, privacy, and accountability. Libraries actively implementing AI were far more likely to report formal governance structures than those still exploring. Closing this gap means treating governance as infrastructure, not compliance.

2. Treat staff readiness as the central implementation variable.

The staff attitudes findings suggest that the distance between institutional interest in AI and sustainable AI use runs directly through workforce capacity and organizational culture. Structured staff development, protected time for guided experimentation, and direct engagement with principled concerns will matter more than additional pilots.

3. Assess institutional readiness before expanding implementation.

Respondents consistently requested structured tools for diagnosing where their organizations actually stand. The expressed appetite for readiness assessment reflects wisdom: libraries that understand their current state make better decisions about next steps.

4. Formalize AI literacy as a library service, not a pilot.

AI literacy appeared across more responses than any other specific service area, including discovery, metadata, and research support. Libraries that treat it as a sustained service, with dedicated staff capacity and integration into existing instruction programs, will find it easier to establish the institutional relationships that make broader partnership possible.

5. Make strategic choices about where to lead and where to partner.

The poll shows libraries seeing opportunities across research support, discovery, metadata, collections, AI literacy, and campus governance. Not every library will pursue all of these equally, and trying to do so risks spreading effort too thin to build depth anywhere. Clear choices about where to lead, where to collaborate with campus partners, and where to defer to others will help libraries concentrate their capacity on areas of genuine comparative advantage.

6. Articulate the library’s distinctive contribution explicitly.

As AI becomes more embedded in research and learning, the library’s role will be shaped by how well it can explain what it uniquely offers. The capabilities that most distinguish libraries from other campus AI actors, including expertise in information evaluation, provenance, bias, ethical reasoning, and cross-disciplinary coordination, require active articulation. Relevance that goes unstated tends to go unrecognized.

What This Quick Poll Suggests

The January 2026 poll captures a field that has passed through the early enthusiasm phase and arrived at a harder set of questions. Among responding institutions, generative AI commands broad attention and strong optimism. The challenge is not convincing libraries that AI matters. The challenge is building the governance structures, staff capacity, and institutional positioning that make engagement durable.

The most important insight from this round of data may be the relationship between governance and implementation. Libraries that have invested in governance structures have moved further into implementation. Libraries that have not invested in governance are making significant operational decisions in a policy vacuum. That gap will become harder to close as AI use becomes more normalized and embedded in existing workflows.

The staff attitudes findings add a dimension that aggregate data tends to flatten: the people doing this work hold a wide range of views, including legitimate principled concerns that deserve substantive engagement rather than change management. Libraries that invest in the organizational conditions supporting thoughtful AI use are likely to fare better than those that invest primarily in tools and access.

The next phase of AI in research libraries will likely be defined by prioritization, governance development, and clearer choices about institutional role. The libraries best positioned for that phase are those that have already started treating AI as a strategic infrastructure question, not a technology question with a strategic veneer.